There’s a phrase you may have heard in tech leadership when it comes to AI, and it usually sounds reasonable the first time you hear it: ‘We’re not ready for AI yet.’

It shows up during steering committees, vendor conversations, and budget reviews. And sometimes, it’s the right call. Maybe data governance still needs work. The team may still be scoping compliance requirements. It may be that the architecture can’t support it yet.

But a lot of the time? It’s fear wearing the mask of strategy.

The problem isn’t that CIOs are being cautious; it’s that caution transforms into avoidance, and avoidance quietly becomes the AI strategy. Meanwhile, competitors who made smaller, smarter bets twelve months ago are now running laps around them.

Here are five ways fear-driven AI decisions are costing your product more than you think.

Summary

Fear-driven AI decisions don’t always look like fear; they show up as expanding stakeholder lists, permanent lockouts with no review dates, and experiments held to impossible standards. The CIOs moving forward are distinguishing real risk from avoidance, running small scoped bets, and building team fluency that compounds over time. The gap between those organizations and the ones still waiting isn’t just about features anymore; it’s about judgment, and it’s widening every month.

1. Confusing Risk Management with Avoidance

Not all hesitation is created equal. There’s a considerable difference between a tech leader who says ‘we’re not ready’ because the team is still scoping data governance, and one who says it simply because the idea of change management feels intimidating.

The first is strategy. The second is fear. “A lot of what I’m hearing isn’t fear of AI itself,” said Sarah Bhatia, Director of AI Product Innovation at Slingshot. “It’s fear of moving too fast and your culture not being ready.”

That discomfort is understandable. AI touches every layer of an organization, and managing such disruption isn’t trivial. But when the discomfort becomes the decision-making framework, you’re no longer practicing risk management; you’re practicing avoidance.

The behavioral signals are usually visible if you know what to look for. “Some avoidant tendencies include more and more stakeholders being brought in,” said Sarah. “Or the goalpost is always moving. When a client is scared to launch, they just keep adding more and more into an MVP.” These patterns aren’t signs of rigor; they’re signs of reservation.

Real risk management gets specific. It asks: what exactly concerns us, and what would need to be true before going ahead? Avoidance doesn’t ask those questions. It just waits.

2. The Lockout That Never Gets Revisited

One of the more extreme forms of fear-driven decision-making is the blanket lockout: no AI tools, no exceptions, no timeline for review.

This policy often originates from legitimate concerns, such as data security, compliance uncertainty, or IP protection. The problem isn’t that those concerns are invalid. The problem is what happens next. Which, in most cases, is nothing.

“If a team isn’t working on a plan to transition, that probably means they’re not taking action,” said Doug Compton, Principal AI Developer at Slingshot. “If they weren’t fearful, they’d be planning it.”

And the lockout rarely comes with a built-in expiration date. “Companies get scared, do an AI lockout, but then never revisit to see if their fears have been addressed,” said Sarah. “Until they’re backed into a corner.”

A lockout without a review mechanism isn’t a policy. It’s a permanent pause disguised as caution. And by the time a competitor, a client, or a board member makes the question unavoidable, the ground has shifted substantially.

3. Every Month You Wait Is a Month Your Competitors Gain

The cost of waiting on AI rarely shows up all at once. It compounds quietly, month by month. While other teams are building fluency, yours hasn’t started.

“You’d be a full year behind your competitors if they’ve already started experimenting,” said Chris Howard, CIO of Slingshot. “Or longer.”

That’s not a hypothetical. The teams that started early aren’t just ahead on features. They’ve built a fundamentally different kind of fluency. They’ve learned what doesn’t work, built confidence, and are making better, faster decisions with each new tool that enters the market.

“The people who started early with AI within our team are now a year ahead in learning and understanding how to engage with these tools,” said Sarah. “As new, more impactful tools come out, those are the people that can jump in a lot faster, because they’ve been in this space for longer.”

There’s a compounding effect that’s easy to underestimate. AI literacy builds on itself. Every project, even the failed ones, adds to your team’s institutional knowledge. The organizations that sat out that learning period aren’t just behind on output. They’re behind on judgment. And that gap doesn’t close quickly.

4. Treating a Failed Experiment Like a Failed Strategy

One of the most counterproductive patterns in fear-driven AI leadership is holding individual experiments to a pass-or-fail standard that doesn’t actually apply to early-stage learning.

A tech leader who avoids AI experimentation because they’re worried about failure is, ironically, guaranteeing a worse outcome. You can’t get to the learning without taking the risk.

“Every failure comes with learning,” said Doug. “You learned what didn’t work, you learned better ways to do it. As long as you’re doing it securely with proper guardrails, you should allow your people to experiment.”

The key qualifier matters: secure, with guardrails. This framing isn’t an argument for reckless experimentation. It’s an argument for deliberately scoped projects designed to surface learning, regardless of outcome.

“The act of going down the path of an AI project means you’re evaluating your company, evaluating your pain points, and seeing where AI can actually be applied,” said Sarah. “Even if the execution doesn’t meet your needs right now, you’ve already gone through a lot of the pain of that foundational process.”

Chris added the practical framing for how to structure those bets: “The first couple of initiatives should be small. Don’t bet the company on the first AI project, or set the team up for a spectacular failure. Start smaller: a single workflow, one internal team, something where a failed outcome is recoverable and a successful one is measurable.”

A failed experiment that teaches you about your data architecture, your team’s readiness, and where the real friction lives isn’t a failed strategy. It’s exactly how the strategy is supposed to work.

5. Letting Security Fears Replace Security Literacy

Security is the most commonly cited reason for avoiding AI tools. And in many cases, that instinct is directionally correct: not every tool belongs in every environment, and the stakes around data protection are real.

But ‘we don’t know if it’s secure’ can quickly become a permanent excuse rather than a solvable problem. The path from fear to informed decision-making is mostly education.

“Most paid tools protect your data,” said Doug. “But you need to understand what each tool actually does with it. Does it train off your inputs? Does it share data with partners? That’s the evaluation you need to do.”

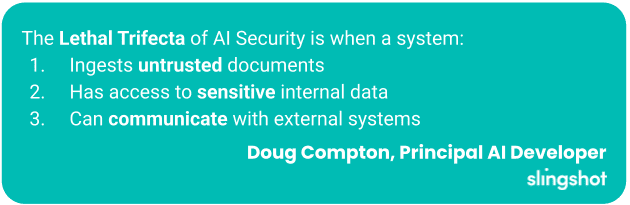

Beyond tool-specific assessment, there’s a wider framework worth internalizing. Doug described what is called the “lethal trifecta”: the most dangerous AI security scenario occurs when a system ingests untrusted documents (possibly with prompt injection), has access to sensitive internal data, and can communicate with external systems. All three together create a serious vulnerability. Break any one of those three conditions, and the risk profile changes dramatically.
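The three conditions above lend themselves to a simple checklist. Below is a minimal Python sketch of that check, assuming a hypothetical `AISystemProfile` record; the class, field names, and example systems are illustrative and not part of any real tool or of Slingshot’s process. It flags a deployment only when all three conditions hold at once, and reports which conditions are present so a team can see which one to break.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AISystemProfile:
    """Hypothetical description of one AI deployment (all names are illustrative)."""
    name: str
    ingests_untrusted_content: bool   # e.g. uploaded documents, web pages, inbound email
    reads_sensitive_data: bool        # e.g. customer records, source code, credentials
    can_communicate_externally: bool  # e.g. sends email, calls outside APIs


def trifecta_conditions(profile: AISystemProfile) -> list[str]:
    """Return the labels of the trifecta conditions this system meets."""
    conditions = {
        "untrusted content": profile.ingests_untrusted_content,
        "sensitive data": profile.reads_sensitive_data,
        "external communication": profile.can_communicate_externally,
    }
    return [label for label, present in conditions.items() if present]


def has_lethal_trifecta(profile: AISystemProfile) -> bool:
    """True only when all three conditions are present together."""
    return len(trifecta_conditions(profile)) == 3


# Two made-up systems: one meets all three conditions, one has outbound access removed.
assistant = AISystemProfile("support-assistant", True, True, True)
sandboxed = AISystemProfile("sandboxed-summarizer", True, True, False)

for system in (assistant, sandboxed):
    status = "REVIEW" if has_lethal_trifecta(system) else "ok"
    print(f"{system.name}: {status} ({', '.join(trifecta_conditions(system))})")
```

A check like this does not replace a security review. It only makes the question concrete: for each system, which of the three conditions could be removed?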

Sarah offered the simplest heuristic for starting that evaluation: “If it’s free, you are the product. Always remember that.”

Understanding the actual threat model gives you a basis for real decisions. Governance policies built around knowledge can protect your product. Policies built around fear cause bottlenecks.

What the Risk-Aware CIO Does Differently

The tech leaders getting this right aren’t reckless. They’re not running every new tool through production environments. They’re protecting time and space for their teams to learn.

They’re identifying the internal champions who are already curious and energized about AI, and giving those people real problems to work on within reasonable constraints. And they’re revisiting their own assumptions at regular intervals, not just once in a policy document that never gets reopened.

“Empower your teams and protect their time to learn and play and explore,” said Sarah. “Set the example. Establish a culture where people can really get in there and see how effective these tools can be.”

“Find the champions within your organization,” Doug said. “The ones who are passionate about it and smart. Give them guardrails, and see what they can do.”

Chris grounded the approach: “It needs to be a reasonable problem, one you won’t be angry about if they fail. Something where the team can explore a little and feel comfortable doing it.”

The Real Risk Is Standing Still

Fear and risk are not the same thing. Teams can evaluate risk, scope it, and plan around it. Fear just accumulates. And the longer it sits, the heavier it gets.

Fearlessness doesn’t define the forward-leaning CIO. Clarity does. They know which concerns are real and which ones are cover. They run small experiments. They build cultures that value learning instead of perfection. And they partner with people who can help them move faster without moving blind.

If you recognize yourself somewhere in these five patterns, the first question isn’t ‘how do we do AI?’ It’s simpler: what specific concern have we been holding onto, and when did we last actually test whether it’s still valid?

The teams that keep asking that question are already moving. Those who are waiting to feel ready may be waiting longer than they think.


Written by: Savannah Cherry

Savannah is our one-woman marketing department. She posts, writes, and creates all things Slingshot. While she may not be making software for you, she does have a minor in Computer Information Systems. We’d call her the opposite of a procrastinator: she can’t rest until all her work is done. She loves playing her Switch and meal-prepping.

Expert: Chris Howard

Chris has been in the technology space for over 20 years, including being Slingshot’s CIO since 2017. He specializes in lean UX design, technology leadership, and new tech with a focus on AI. He’s currently involved in several AI-focused projects within Slingshot.

Expert: Sarah Bhatia

Sarah Bhatia brings people together. In her decade-plus of product and product-adjacent experience, her focus has been on cross-functional collaboration, asking lots of questions, and getting big results. She excels at strategy development and at getting the right brains in the room to solve big problems. Sarah would describe herself as a daredevil, because she’s not afraid to ask “dumb” questions, get smart answers, and take (calculated) risks.

Expert: Doug Compton

Born and raised in Louisville, Doug’s interest in technology started at 11 when he began writing computer games. What began as a hobby turned into his career. With broad interests that range from snorkeling and science to WWII history and real estate, Doug uses his “down time” to create new technologies for mobile and web applications.

Frequently Asked Questions

Risk management gets specific: it names the concern, defines what would need to be true to move forward, and builds a plan around that. Avoidance doesn't ask those questions. It waits, often indefinitely, while the concern stays vague and the goalposts keep moving.

Lockouts rarely include a built-in review date, so they default to permanent. The original concern may have been valid, but without a mechanism to revisit it, the policy outlives the problem it was meant to solve and quietly becomes the AI strategy by default.

The cost compounds month over month. Teams that started experimenting a year ago aren't just ahead on output; they've built a different kind of fluency. They've learned what doesn't work, made faster decisions, and are better positioned to adopt new tools as they emerge. That gap doesn't close quickly.

A well-scoped experiment surfaces learning regardless of outcome. Even a failed project reveals something about your data architecture, team readiness, and where friction actually lives. That foundational knowledge shortens the path on every initiative that follows.

Start with the specific questions: Does this tool train on your inputs? Does it share data with third parties? From there, assess whether your use case creates what security experts call the "lethal trifecta": ingesting untrusted documents, accessing sensitive internal data, and communicating with external systems simultaneously. Break any one of those conditions and the risk profile changes significantly.