If you’re considering integrating AI tools across your organization, you’re already aware of the pressure. Move fast, stay competitive, don’t fall behind. But here’s what often gets overlooked in the rush: not all AI tools handle your data the same way. The consequences of skipping security vetting are anything but theoretical.

As AI reaches deeper into proprietary data, infrastructure, and even customer interactions, the stakes are high. A misstep here doesn’t just mean a bug; it could mean leaking sensitive data, violating compliance requirements, or even helping train a model your competitors use.

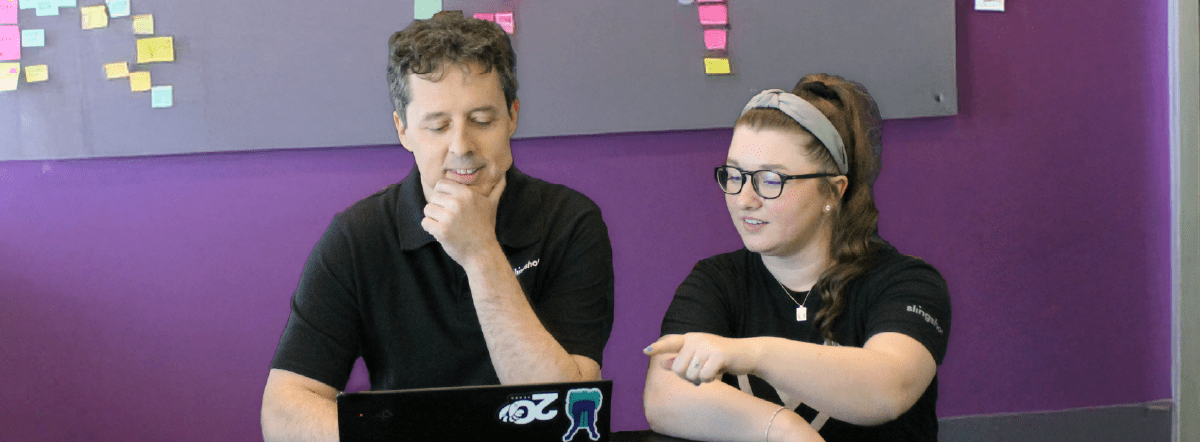

We sat down with two of our in-house experts, Doug Compton and Steve Anderson, who’ve worked hands-on with AI tools, to explore the critical security questions you should ask before giving a new AI vendor the green light.


1. Does this tool generate secure code?

Let’s start with one of the most common use cases: developers asking AI to write code. It sounds efficient until you realize most models don’t default to secure practices.

“If you’re just asking a model to provide code, and you don’t specifically tailor your prompts to address security concerns, models will often generate code that simply does the task you asked it to do. Nothing more,” explained Steve. “That can inherently lead to security gaps.”

Unless you prompt with security in mind, the code output may not handle authentication, authorization, or input validation effectively. That means you’re still on the hook. As Doug put it, “A developer is still responsible for the code their AI writes.”

- Does the model consider secure coding practices by default?

- Can you control or audit the code generation process?

- Is there an organizational setting to enforce security constraints?
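To make Steve’s point concrete, here is a minimal Python sketch (our own illustration, not output from any particular tool) of the gap between code that “simply does the task” and code written with input validation in mind. The `users` table, both function names, and the username rule are hypothetical.

```python
import re
import sqlite3

# Hypothetical rule for this sketch: 3-32 letters, digits, or underscores.
USERNAME_PATTERN = re.compile(r"[A-Za-z0-9_]{3,32}")


def find_user_naive(conn: sqlite3.Connection, username: str) -> list:
    # "Does the task" version: interpolates user input straight into SQL,
    # so input like "x' OR '1'='1" rewrites the query (SQL injection).
    query = f"SELECT id, username FROM users WHERE username = '{username}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, username: str) -> list:
    # Validate input first and reject anything outside the expected shape.
    if not isinstance(username, str) or not USERNAME_PATTERN.fullmatch(username):
        raise ValueError("invalid username")
    # Parameterized query: the driver passes username as data, never as SQL.
    return conn.execute(
        "SELECT id, username FROM users WHERE username = ?", (username,)
    ).fetchall()


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, username TEXT)")
    conn.executemany(
        "INSERT INTO users (username) VALUES (?)", [("alice",), ("bob",)]
    )
    attack = "x' OR '1'='1"
    print(find_user_naive(conn, attack))  # returns every row, not zero
    print(find_user_safe(conn, "alice"))
    try:
        find_user_safe(conn, attack)
    except ValueError as err:
        print("rejected:", err)
```

Neither version deals with authentication or authorization; the point is simply that the safer behavior has to be asked for in the prompt or added during review.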

2. What does the tool do with your data?

When it comes to AI, data privacy isn’t just a box to check; it’s the center of the target. Whether it’s source code, customer records, or sensitive prompts, how the tool handles your data matters.

“One downside of these tools is that if you’re not careful, they can take your code and train on it,” said Doug. “With some free tools, that’s exactly what’s happening. Your data is the product.”

Training on your inputs isn’t the only risk, either. Steve added, “Prompt injections aren’t going away. If your AI has access to your data, a hacker can potentially use prompt injection to extract that information.”

- Does the tool store your inputs? If so, where and for how long?

- Does it use your data to train models?

- Are there settings for ephemeral storage or auto-deletion?

3. Do they have real security certifications or just logos?

Here’s where it gets tricky. A vendor might say they’re SOC 2 compliant. But unless you’ve seen the actual audit report, you don’t really know.

“Anybody can slap a SOC 2 logo on their site,” Steve noted. “But if you’re a business partner, you can, and should, request their audit file.”

Doug reinforced the point: “SOC 2 just says they’re doing what they say they’re doing. If they say ‘we sell your data,’ SOC 2 will confirm that. It doesn’t say whether the policy is good, just whether they follow it.”

Certifications and controls that matter:

- SOC 2: Ask for the audit report, not just the badge.

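- HIPAA: A good sign for tools handling healthcare data. There is no official HIPAA certification, so ask whether the vendor will sign a Business Associate Agreement (BAA).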

- PCI-DSS: Relevant for tools touching payment information.

- Enterprise controls: Can admins enforce privacy settings org-wide?

4. What’s the risk of accidental data exposure?

One of the more subtle but dangerous risks comes from how tools handle input and output. Many companies assume prompts and results are private. Often, they’re not.

Doug noted, “Prompts generally include context, which is often sensitive business logic. If your LLM is accessible externally, through an API or chatbot, there’s not a lot you can do to keep the prompt secret.”

External access becomes especially dangerous once an LLM is connected to live systems.

“Just opening up a chatbot could open up several security holes,” said Steve. “If it has access to consumer billing information, you’d better make sure it’s locked down. A public LLM should never have access to sensitive data.”

What to audit:

- Can prompts, outputs, or uploaded files be accessed externally?

- Is data encrypted in transit and at rest?

- Does the tool support user-based access control in production?
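One way to act on Steve’s rule is to filter data before it ever reaches the model, based on who (or what) is asking. The minimal Python sketch below assumes a hypothetical customer record and hypothetical role names. It uses a deny-by-default allowlist, so a public chatbot never receives billing fields, no matter what a prompt asks for.

```python
from typing import Any

# Hypothetical customer record; field names are illustrative only.
CUSTOMER = {
    "name": "Jane Doe",
    "plan": "Pro",
    "card_last4": "4242",
    "billing_address": "123 Main St",
}

# Hypothetical roles mapped to the fields each may pass into an LLM prompt.
FIELD_ALLOWLIST: dict[str, frozenset[str]] = {
    "public_chatbot": frozenset({"plan"}),
    "support_agent": frozenset({"name", "plan", "card_last4"}),
}


def fields_for_llm(record: dict[str, Any], role: str) -> dict[str, Any]:
    # Deny by default: an unknown role gets an empty allowlist.
    allowed = FIELD_ALLOWLIST.get(role, frozenset())
    # Keep only allowlisted fields; everything else never enters the prompt.
    return {key: value for key, value in record.items() if key in allowed}


def build_prompt(question: str, record: dict[str, Any], role: str) -> str:
    # The filtering happens in our code, outside the model.
    context = fields_for_llm(record, role)
    return f"Customer context: {context}\nQuestion: {question}"


if __name__ == "__main__":
    print(build_prompt("What plan am I on?", CUSTOMER, "public_chatbot"))
```

Because the filter runs before the prompt is built, a prompt injection can only surface data the model was actually given; it cannot talk its way into fields that were never sent.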

5. Is there a difference between free and paid versions?

The answer was unanimous: yes, and the difference is night and day.

Doug pointed out a common and valid concern: some free tools, including developer-focused ones, use your inputs, code included, to train their models. That means your internal work could end up in a training dataset without you realizing it.

Paid plans often come with enterprise-grade controls, private data settings, and contractual guarantees. Free tools usually don’t.

Steve elaborated: “Cursor, for example, has org-level controls that prevent individual developers from going rogue. Free tools rarely offer that.”

The tradeoffs:

- Free tools may log or store your prompts.

- Paid tiers often include opt-out options, audit logs, and SLAs.

- Admin-level controls and GDPR-related guarantees may be available only on enterprise plans.

If security matters, “free” will ultimately cost you more.

6. Are you responsible for what the AI does?

Let’s say it clearly: yes, you are.

In a widely publicized recent incident, a women’s safety app accidentally exposed thousands of user records, a failure many attributed to “vibe coding” with AI.

“Vibe coding implies you’re not checking the output of the LLM,” said Doug. “It means you’re accepting whatever it gives you. That’s a huge risk.”

Whether the AI’s output feels right is no substitute for validation. If you’re shipping AI-generated code to production without proper review, you’re building on sand.

- Assume all AI-generated code is untrusted until proven otherwise.

- Implement a human review loop for all customer-facing content.

- Build automated tests to validate outputs before deployment.
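As an example of that last point, here is a minimal pytest-style sketch. Suppose an AI assistant wrote `is_safe_redirect`, a hypothetical helper meant to allow only same-site relative paths. The human-written tests spell out what the function must reject, including protocol-relative `//` URLs and a backslash variant that a plausible-looking first draft can easily miss, so the code can’t ship until they pass.

```python
from urllib.parse import urlparse


def is_safe_redirect(target: str) -> bool:
    # Hypothetical AI-generated helper under review: accept only same-site
    # relative paths such as "/account".
    parsed = urlparse(target)
    return (
        target.startswith("/")
        and not target.startswith("//")  # "//evil.example" points to another host
        and "\\" not in target  # browsers may normalize "\" to "/"
        and not parsed.scheme
        and not parsed.netloc
    )


# Tests written by a human reviewer; run with `pytest`.
def test_allows_relative_path():
    assert is_safe_redirect("/account")


def test_rejects_absolute_url():
    assert not is_safe_redirect("https://evil.example/login")


def test_rejects_protocol_relative_url():
    assert not is_safe_redirect("//evil.example")


def test_rejects_backslash_trick():
    assert not is_safe_redirect("/\\evil.example")
```

Wiring tests like these into CI turns “review the AI’s output” from a good intention into a gate the code has to pass.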

Final Thought: It’s Not About Paranoia. It’s About Due Diligence

“There’s a lot of concern about proprietary data training models,” said Steve. “But the risk isn’t just theoretical. A poorly configured LLM can give your competitor a leg up by exposing your IP.”

Even tools that feel polished, fast, and fun to use can have real blind spots. The trick is not to fear them but to vet them like any other enterprise vendor.

And yes, if you’re genuinely concerned, local hosting is back. “You can run an LLM on-prem,” Doug noted. “If you’re handling really sensitive data, it’s worth the cost.”


Written by: Savannah Cherry

Savannah is our one-woman marketing department. She posts, writes, and creates all things Slingshot. While she may not be making software for you, she does have a minor in Computer Information Systems. We’d call her the opposite of a procrastinator: she can’t rest until all her work is done. She loves playing her Nintendo Switch and meal-prepping.

Expert: Doug Compton

Doug was born and raised in Louisville, and his interest in technology started at 11, when he began writing computer games. What began as a hobby turned into his career. With interests ranging from snorkeling and science to WWII history and real estate, Doug uses his “downtime” to create new technologies for mobile and web applications.

Expert: Steve Anderson

Steve is one of our AWS certified solutions architects. Whether it’s coding, testing, deployment, support, infrastructure, or server setup, he’s always thinking about the cloud as he builds. Steve is extremely adaptable and can pick up a project and run with it. He’s flexible and able to fill in where needed. In his spare time, he enjoys family time, the outdoors, and reading.